Why did the 1942 NAB standard use a ±2 dB tolerance curve, not time constants?

Question answered on this page: The 1942 NAB Recording and Reproducing Standards defined the recording characteristic as a graph carrying a "tolerance ± 2 db" legend. No time constants (microsecond values) appear anywhere in the standard. Why? We went in with two working hypotheses and tested them against the 1941–1955 primary sources:

- Hypothesis (α), the measurement-precision theory: the measurement technology of the day could not determine time constants (R×C values) accurately enough, so the committee settled for a graph definition.

- Hypothesis (β), the diversity-accommodation theory: the committee needed to wrap a diverse set of existing recording implementations into a single common envelope without binding the standard to any particular R-C design; a graph with a ±2 dB tolerance was the practical meeting point.

The short answer: we found no primary-source text directly supporting (α), while (β) is backed by several independent primary sources in concrete terms. What follows is the record of that verification.

In short

As far as the primary sources directly show, the 1942 NAB standard was written as a curve + tolerance mainly because the committee needed to wrap a highly diverse set of existing recording implementations into a single common envelope. U.S. broadcasters and equipment manufacturers were operating with substantially different circuit designs and curves. The committee's charge was to reduce the adjustment burden on the reproducing side while allowing that diversity to persist. A graph with a ±2 dB tolerance was the practical meeting point that made adoption possible.

→ For the full drafting history and content of the 1942 standard, see The first-ever recording standard — the 1942 NAB.

Testing hypothesis (α), the measurement-precision theory

Because time constants first appear in a standards document only in 1953, one natural guess is that earlier standards could not have been defined that way: that measurement technology was simply not yet ready. We entered this investigation with that possibility as an explicit working hypothesis. In a fresh re-examination of the 1941–1955 primary sources — NAB Reports, Proc. IRE, Audio Engineering, JAES, the NAB Engineering Handbook, and related trade press — we found no direct statement supporting that hypothesis.

- Neither the 1942 standard text itself (reprinted verbatim in NAB Engineering Handbook 3rd Edition, 1945, §21.1) nor the 1945 Chinn Glossary (§20.1) contains any phrase like "measurement accuracy" or "cannot be measured in time constants."

- The November 1945 Chinn Glossary defines pre-emphasis, transition frequency, crossover and turnover as concepts, but no microsecond value appears anywhere in the glossary. As of late 1945, the time-constant idiom had not yet entered the disc-recording vocabulary in any codified way.

- From 1947 onward, microsecond values (35–40 μs, 50 μs, 75 μs, 100 μs) suddenly begin to appear throughout the trade press and committee minutes. The vocabulary shift lands in 1947, not earlier.

The more accurate reading is not "they could not measure it" but "the vocabulary for talking about it in those terms had not yet propagated through the industry."

What the primary sources actually say

Broadcasters were juggling up to ten equalizer settings

The 13 June 1941 issue of NAB Reports described the situation bluntly:

"broadcast stations use as high as ten different equalizer settings for reproducing various transcriptions"

— NAB Reports, Vol.9 No.23, June 13, 1941, p.567

The committee's charge was "minimum adjustments on the reproducing system"

At the first meeting of the Recording and Reproducing Standards Committee (RRSC), held in Detroit on 26 June 1941, the committee's task was defined as follows:

"The task of the committee is to formulate 'Recording and Reproducing Standards' that will tend to bring about uniform quality of reproduction of transcriptions with a minimum number of equipment adjustments on the reproducing system."

— NAB Reports, Vol.9 No.28, July 18, 1941, p.669

The committee's focus was not "define the one correct ideal curve" but "reduce the adjustment burden on the reproducing side."

Smeby acknowledged the diversity directly

Lynne C. Smeby, NAB's Technical Director and chairman of the RRSC, opened his published exposition of the standard by frankly acknowledging how varied the field was:

"Quite a number of different characteristics have been used by the various manufacturers of transcriptions, recording equipment, and reproducing equipment."

— Smeby, "Recording and Reproducing Standards," Proc. IRE, August 1942, p.355

A graph with a ±2 dB tolerance was a pragmatic way to fit that diversity into a single frame. A definition that locked the standard to a specific R-C network would have forced existing installations to be rebuilt and, quite possibly, would have meant no adoption at all.

What does the ±2 dB stand for?

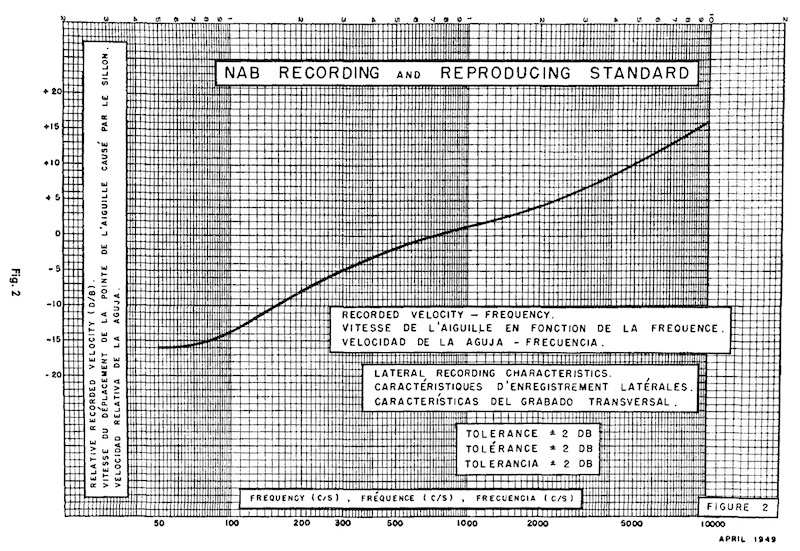

The recording frequency characteristic curves in the 1942 standard (Figure 1 = vertical, Figure 2 = lateral) carry an explicit legend:

"NAB RECORDING & REPRODUCING STANDARDS COMMITTEE / OCT 23, 1941 / TOLERANCE ± 2 db"

— NAB Engineering Handbook (3rd Edition, 1945), §21.1, Figures 1-2 (verbatim reprint of the 1942 standard text)

Two points deserve attention.

First, the drawing date on the figures is 23 October 1941, which matches exactly the date of the New York plenary meeting of the RRSC at which fifteen standards were adopted:

"After much discussion of the Executive Committee's report, 15 standards were adopted including two on the highly controversial subject of Recording Frequency Characteristics."

— NAB Reports, Vol.9 No.43, October 31, 1941, p.47

The match strongly suggests that these were the very figures presented to the committee on the day the recording frequency standards were adopted.

Second, the numerical basis for ±2 dB — why ±2 and not ±1 or ±3 — is not stated anywhere in the 1941–1955 primary sources we have examined (outside of committee internal memos). The most natural reading is that ±2 dB was the envelope wide enough to allow consensus on what the same article openly called a "highly controversial subject."

The 1945 Chinn Glossary contained no time constants

A second decisive piece of evidence is Howard A. Chinn's "Glossary of Disk-Recording Terms," published in Proceedings of the IRE in November 1945 (and reprinted in the NAB Engineering Handbook 3rd Edition, §20.1). The glossary was intended as a unifying vocabulary for the disc-recording industry as a whole.

Here are the key entries:

Pre-emphasis: "A method of recording whereby the relative recorded level of some frequencies is increased with respect to other frequencies."

Transition frequency: "The frequency at which the change-over from constant-amplitude recording to constant-velocity recording takes place."

Crossover frequency: "See transition frequency."

Turnover frequency: "Same as transition frequency."

— Chinn, "Glossary of Disk-Recording Terms," Proc. IRE, November 1945

- No microsecond value appears anywhere in the glossary.

- "Recording characteristic" is not a glossary headword at all.

- Pre-emphasis, transition frequency, crossover and turnover are defined only as concepts; none of them assume standardization in time constants.

As of the end of 1945, the disc-recording industry's shared vocabulary had not yet adopted the time-constant idiom. This is consistent with the 1947-onward surge in microsecond-based discussion described below.

What actually changed between 1947 and 1953

When NARTB moved to a 3,180 / 318 / 75 μs time-constant definition in 1953, what had changed? The primary sources suggest not that measurement technology had transformed, but that the industry had begun discussing the same problem in a different vocabulary.
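For readers who think in turnover frequencies rather than microseconds, each time constant τ maps to a corner frequency through the standard first-order relation f = 1/(2πτ). The short Python sketch below applies that relation to the three 1953 values quoted above. The function name `corner_frequency_hz` is ours, chosen for illustration; it does not come from any standards text.

```python
import math

def corner_frequency_hz(tau_us: float) -> float:
    """Corner (turnover) frequency in Hz of a first-order R-C section
    whose time constant is given in microseconds: f = 1 / (2 * pi * tau)."""
    return 1.0 / (2.0 * math.pi * tau_us * 1e-6)

# The three time constants quoted in this article for the 1953 definition.
for tau_us in (3180.0, 318.0, 75.0):
    print(f"{tau_us:7.0f} us -> {corner_frequency_hz(tau_us):8.1f} Hz")
```

The three values come out at roughly 50 Hz, 500 Hz and 2,120 Hz, which is why the same characteristic can be described either in microseconds or in turnover frequencies.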

1951 AES: "an impossible task"

In January 1951, the AES standardization committee explicitly abandoned the ambition of unifying the recording side and pivoted to fixing a playback standard instead:

"impossible task of achieving a universal recorded characteristic"

— Journal of the Audio Engineering Society, January 1951, pp.22-23

What AES described as the blocker was not measurement accuracy but the diversity of recording-side implementations itself. Structurally, it was the same problem NAB had faced in 1941.

1953 Moyer: "the obvious difficulty with a curve alone"

In July 1953, Moyer, who worked on recording characteristics at RCA Victor under H. E. Roys, explained the motivation for moving to time-constant definitions in Audio Engineering:

"The obvious difficulty with a curve alone is that the true crossover frequency and pre-emphasis are usually obscured, making the design of suitable equalizers possible only by the cut and try method."

— Moyer, "Evolution of Disk Recording Characteristics," Audio Engineering, Vol.37 No.7, July 1953, p.22

Moyer's argument is not "we could not measure it" but "a graph alone is inconvenient for practical equalizer design." The motivation for the transition is design and communication ergonomics, not measurement precision.
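Moyer's point can be made concrete with a small sketch. Once a characteristic is stated as time constants, its level at any frequency follows from a closed-form expression, so an equalizer can be designed by calculation rather than by reading points off a graph. The Python below assumes the conventional three-time-constant network form (two rising first-order terms at 3,180 μs and 75 μs, one falling term at 318 μs) normalized to 0 dB at 1 kHz. That network form, the 1 kHz reference and the function name `recording_level_db` are our assumptions for illustration, not text quoted from the 1953 standard or from Moyer.

```python
import math

# Time constants in seconds (the 1953 values quoted in this article).
T_BASS = 3180e-6      # low-frequency shelf
T_TURNOVER = 318e-6   # bass turnover
T_TREBLE = 75e-6      # treble pre-emphasis

def _raw_db(freq_hz: float) -> float:
    """Unnormalized recording-side level in dB at freq_hz.
    Assumes the network form (1 + sT_BASS)(1 + sT_TREBLE) / (1 + sT_TURNOVER)."""
    w = 2.0 * math.pi * freq_hz
    rise = (1 + (w * T_BASS) ** 2) * (1 + (w * T_TREBLE) ** 2)
    fall = 1 + (w * T_TURNOVER) ** 2
    # rise and fall are squared magnitudes, hence 10 * log10 rather than 20.
    return 10.0 * math.log10(rise / fall)

def recording_level_db(freq_hz: float, ref_hz: float = 1000.0) -> float:
    """Recording-side level in dB relative to the level at ref_hz."""
    return _raw_db(freq_hz) - _raw_db(ref_hz)

for f in (50, 100, 500, 1000, 5000, 10000):
    print(f"{f:6d} Hz  {recording_level_db(f):+6.2f} dB")
```

Under these assumptions the curve sits about 13 dB below the 1 kHz level at 100 Hz and a little under 14 dB above it at 10 kHz. A designer working only from a printed graph would have to estimate the turnover and pre-emphasis from points like these, which is the "cut and try" situation Moyer describes.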

Vocabulary first, numerical definition later

Between 1947 and 1953 the trade press kept circling the 100 μs overshoot problem, using microsecond values freely all along. Even while the standards documents were still in graph form, engineers were already thinking and speaking in time constants. What the 1953 NARTB and 1954 RIAA efforts actually did was not "introduce a new measurement technique" but bring the standards text up to the vocabulary the industry had already adopted in practice.

Summary

Reading the 1941–1955 primary sources as a whole, the reason the 1942 NAB standard was written as a graph with a ±2 dB tolerance comes out like this:

- U.S. broadcasters were living through a "proliferation era" with as many as ten different equalizer settings in use (NAB Reports, June 13, 1941).

- The committee's charge was "minimum adjustments on the reproducing system," not "find the one true ideal curve" (NAB Reports, July 18, 1941).

- Smeby himself openly acknowledged the diversity of existing implementations (Proc. IRE, August 1942).

- The recording frequency standard was explicitly called a "highly controversial subject," and the ±2 dB tolerance was the envelope wide enough to reach consensus on it (NAB Reports, October 31, 1941; Handbook 1945, §21.1, figure legends).

- The November 1945 Chinn Glossary, the field's shared vocabulary, contains no microsecond values at all. The time-constant idiom enters the discourse from 1947 onward (Chinn, Proc. IRE, November 1945).

- AES called unifying the recording side "impossible" in 1951, pointing to the diversity of recording-side implementations; Moyer explained the 1953 shift to time constants as a matter of design convenience. Neither cites measurement accuracy.

The 1942 graph-plus-±2 dB form likely represented a practical accommodation of a transitional industry's diversity, not a symptom of immature measurement technology. And when that form was rewritten in time constants in 1953, it was most plausibly because the industry's working vocabulary had already moved there, not because anything about measurement had fundamentally changed.

Revision history

- May 17, 2026: Added figure (lateral recording characteristic from the 1949 NAB standard)

- April 15, 2026: Initial publication