(In a Nutshell Part 1) Why wasn't there a unified standard from the start? — From the birth of electrical recording (1925) to the NAB standard (1942)


Why wasn't there a unified standard from the start?

The history of phono EQ curves begins in 1925. Yet the concept of a "standard" would not appear for another seventeen years. Why did it take so long? The answer lies in the very nature of the technology itself.

For reference, the RIAA recording and playback characteristic, which would eventually become the unified standard, is defined by three time constants, each corresponding to one feature of the curve: the turnover (the frequency below which recorded low-frequency amplitude is progressively reduced), the treble pre-emphasis (high frequencies are boosted during recording and restored during playback to reduce noise), and the bass shelf (very low frequencies are boosted during recording and attenuated during playback to reduce rumble and the effects of disc warping). Playback applies the inverse of the recording characteristic. The various historical curves differ in which of these three parameters are present and in the values they take.
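
As a rough illustration of how three time constants define a whole curve, here is a minimal Python sketch of the RIAA playback response. The values 3180 μs, 318 μs and 75 μs are the commonly published RIAA figures and are not quoted elsewhere in this article; the function and constant names are hypothetical, chosen only for this example.

```python
import math

# Commonly published RIAA time constants in microseconds
# (assumed here; the article itself does not list them).
BASS_SHELF_US = 3180.0  # corner near 50 Hz
TURNOVER_US = 318.0     # corner near 500 Hz
TREBLE_US = 75.0        # corner near 2122 Hz


def _playback_gain(freq_hz: float) -> float:
    """Unnormalised magnitude of the playback (de-emphasis) response."""
    w = 2 * math.pi * freq_hz

    def term(tau_us: float) -> float:
        # |1 + j*w*tau| for a single first-order section
        return math.hypot(1.0, w * tau_us * 1e-6)

    return term(TURNOVER_US) / (term(BASS_SHELF_US) * term(TREBLE_US))


def riaa_playback_db(freq_hz: float) -> float:
    """Playback gain in dB, normalised to 0 dB at 1 kHz."""
    return 20 * math.log10(_playback_gain(freq_hz) / _playback_gain(1000.0))


for f in (20, 50, 500, 1000, 2122, 20000):
    print(f"{f:>6} Hz: {riaa_playback_db(f):+6.2f} dB")
```

Under these assumptions the playback curve comes out at roughly +19.3 dB at 20 Hz and roughly −19.6 dB at 20 kHz. Changing or removing one of the three constants gives a curve of the same family, which is one way to picture how the historical curves relate to each other.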

1. The physical problem of cutting sound into a record

Before the invention of electrical recording, the frequency range that could be captured on a record was extremely narrow. In the acoustic recording era, it extended only from roughly 250 Hz to 2,500 Hz. The advent of electrical recording in 1925 expanded this range—down to 80–100 Hz and up to around 5,000 Hz.

This was a major advance, but it also created new problems.

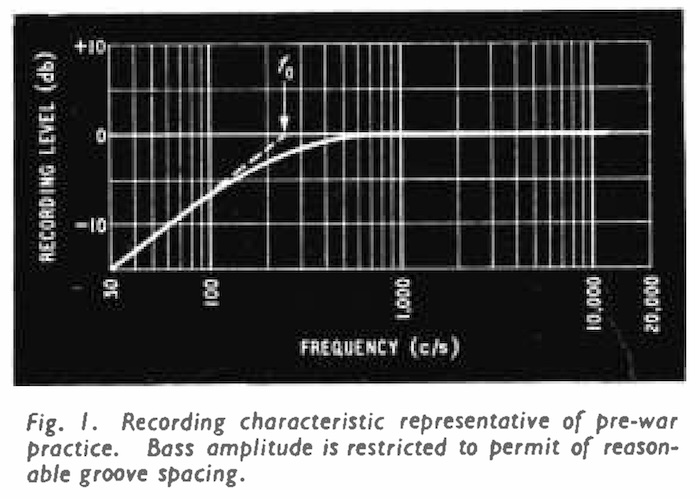

The low-frequency problem: Record grooves swing laterally in proportion to the amplitude of the sound. At a given recorded velocity (roughly, a given volume), the displacement grows as the frequency falls: halving the frequency doubles the swing. With electrical recording's extended bass range, low-frequency grooves risked colliding with adjacent grooves unless the groove spacing was widened, and wider spacing drastically reduced the playing time per side.
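
The relationship between frequency and groove swing can be made concrete with a small sketch. It assumes a sinusoidal lateral groove cut at constant peak velocity, for which peak displacement equals velocity divided by 2πf. The function name and the 5 cm/s figure are illustrative assumptions, not values taken from the sources cited here.

```python
import math


def peak_displacement_um(peak_velocity_cm_s: float, freq_hz: float) -> float:
    """Peak lateral displacement (micrometres) of a sinusoidal groove
    cut at the given peak velocity: x = v / (2 * pi * f)."""
    velocity_um_s = peak_velocity_cm_s * 1e4  # cm/s -> um/s
    return velocity_um_s / (2 * math.pi * freq_hz)


# Same assumed velocity (5 cm/s) at several frequencies.
for f in (50, 100, 250, 1000, 5000):
    print(f"{f:>5} Hz: {peak_displacement_um(5.0, f):8.1f} um")
```

Because displacement is inversely proportional to frequency, a 100 Hz tone cut this way swings ten times as far as a 1,000 Hz tone of the same velocity.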

(For reference: according to O.J. Russell's article "Stylus in Wonderland" in the October 1954 issue of Wireless World, a 12-inch 78 rpm disc could typically hold about five minutes per side, but "it would have a possible playing time of less than a minute with an unattenuated bass characteristic.")

The high-frequency problem: Conversely, at high frequencies the displacement becomes so small that the signal is buried in the noise of the disc surface (surface noise). In 1925, the upper recording limit was around 5,000 Hz, so this was less of a concern than the bass problem; however, as recording technology advanced and the upper limit extended, treble too required countermeasures.

2. The solution born from the world's first electrical recording

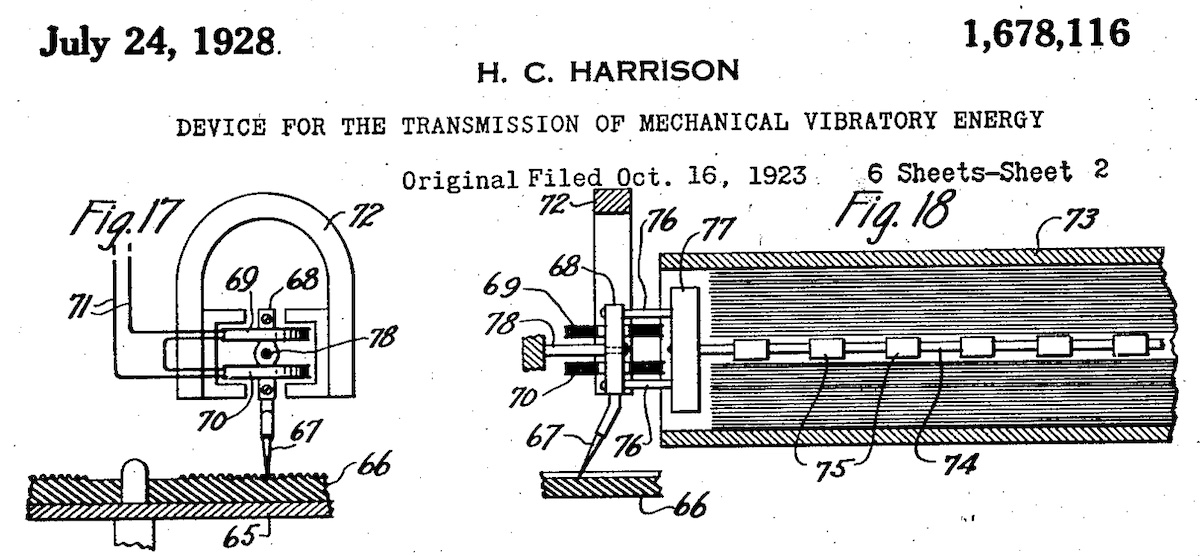

The engineers who solved this problem were J.P. Maxfield and H.C. Harrison of Bell Telephone Laboratories (Bell Labs).

Their electrical recording system, put into practical use in 1925, was designed as follows:

- Below a certain frequency (low range): Record with the level reduced progressively as frequency falls, so that groove amplitude stays roughly constant (constant-amplitude characteristic). Restore the reduction by amplification during playback.

- Above that frequency (mid to high range): Record without modification (constant-velocity characteristic).

The frequency at which this transition occurs is called the "turnover frequency." In the earliest electrical recordings, the turnover was set at around 250 Hz (contemporary technical documents specified it loosely as "around 200–300 Hz").
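
The two regions described above can be approximated with a first-order model. This is only an idealised sketch: it treats the turnover as a simple high-pass corner at an assumed 250 Hz, whereas the response of the 1925 equipment was set by its mechanical construction. The function names are hypothetical.

```python
import math


def recorded_level_db(freq_hz: float, turnover_hz: float = 250.0) -> float:
    """Recorded velocity relative to a pure constant-velocity cut, in dB.

    Far below the turnover the level falls by about 6 dB per octave
    (constant amplitude); far above it the level is flat (constant velocity).
    """
    ratio = freq_hz / turnover_hz
    return 20 * math.log10(ratio / math.sqrt(1 + ratio ** 2))


def playback_boost_db(freq_hz: float, turnover_hz: float = 250.0) -> float:
    """Bass boost needed on playback to undo the recording reduction."""
    return -recorded_level_db(freq_hz, turnover_hz)


for f in (31.25, 62.5, 125, 250, 500, 1000, 4000):
    print(f"{f:>7} Hz: record {recorded_level_db(f):+6.2f} dB, "
          f"playback {playback_boost_db(f):+6.2f} dB")
```

In this model the level is about 3 dB down at the turnover frequency itself, and the playback boost mirrors the recording reduction exactly.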

The EQ curve was not a "circuit" — it was the physics of the machine

This is the key to understanding why standardization proved so difficult.

Modern phono equalizers use electronic circuits to control EQ curves with precision. But in 1925, no such circuits existed.

What Maxfield and Harrison designed was a mechanism called a "rubber-line recorder." It was a mechanical vibration transmission system using rubber elements, applying the principles of transmission lines from telephone engineering. The EQ curve characteristic was physically determined by the material properties of the components—their composition, shape, and mass.

In other words, the curve was not something you "designed" — it was something that emerged from the physical nature of the machine.

On the playback side, compensation was initially handled by acoustic devices. Victor's Victrola Credenza (a gramophone) achieved bass amplification matched to the electrical recording characteristic through ingenious design of its logarithmic horn (see Pt.1).

3. Late 1920s–1930s: technological evolution and changing curves

From 1925 onward, electrical playback equipment (electric gramophones) began to spread: Brunswick's Panatrope (c. 1925–26), Columbia's Kolster, Victor's Electrola, and others. As the transition from acoustic to electrical playback progressed, the compensation on the playback side shifted from mechanical horns to electronic circuits.

This change also affected the handling of EQ curves.

The turnover frequency moved upward: By the early 1930s, Columbia (c. 1930–31) and RCA Victor (c. 1932) had raised their turnover frequencies from around 250 Hz to approximately 500 Hz, as confirmed by various contemporary sources (see Pt.3).

Why the increase? Raising the turnover means extending the frequency range over which bass amplitude is reduced. Improvements in cutterheads and microphones had made it possible to record lower frequencies, so raising the turnover was a practical way to keep groove displacement under control and achieve stable recording.
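
A rough sense of what the higher turnover buys can be had from the same kind of idealised first-order model, in which peak displacement for a given mid-band velocity is proportional to 1/√(f² + f_t²), where f_t is the turnover frequency. The model and the function name are assumptions made for illustration; the actual curves of the period were not this tidy.

```python
import math


def displacement_change_db(freq_hz: float,
                           old_turnover_hz: float = 250.0,
                           new_turnover_hz: float = 500.0) -> float:
    """Change in peak groove displacement (dB) when the turnover is raised,
    assuming a first-order turnover and unchanged mid-band velocity."""
    old = 1.0 / math.hypot(freq_hz, old_turnover_hz)
    new = 1.0 / math.hypot(freq_hz, new_turnover_hz)
    return 20 * math.log10(new / old)


for f in (50, 100, 250, 1000, 5000):
    print(f"{f:>5} Hz: {displacement_change_db(f):+5.2f} dB")
```

Well below both turnovers, doubling the turnover roughly halves the groove swing (about 6 dB less displacement), while the high frequencies are left almost untouched.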

However, this required corresponding adjustments on the playback side. The bass attenuated during recording had to be boosted back during playback. In the United States, electrical playback equipment had already become widespread, so this adjustment could be implemented simply by modifying the circuit constants of the playback amplifier.

In Britain and Europe, the situation was different. Acoustic gramophones remained the dominant playback medium, including in colonial markets. Raising the turnover would have left listeners without a means of compensating for the reduced bass—resulting in thin-sounding playback. For this reason, Britain and Europe maintained a turnover around 250 Hz until the early 1950s. (From this point on, we will focus on the US story.)

Each company evolved independently: Yet none of these changes followed any industry-wide standard. Curves were determined by each company's cutterhead and recording amplifier designs. Even RCA Victor and Columbia had subtly different characteristics.

Why didn't they unify? More precisely, there was little awareness that unification was even necessary.

In this era, the concept of "high-fidelity reproduction" simply did not exist for consumer records. The signal-to-noise ratio of a shellac disc was roughly 30 dB at best, and playback required a steel needle tracking at hundreds of grams. In terms of sound quality, records lagged behind radio broadcasting and optical sound recording for motion pictures.

Record company advertising emphasized the appeal of artists and entertainment, not sound quality. Victor spent lavishly on advertising and prominent musicians, marketing the Victrola as a fine musical instrument and a worthy addition to the most elegant of home living rooms and parlors.

Under these circumstances, it was entirely normal for listeners to adjust their tone controls to achieve a sound that was "pleasing enough." Moreover, technology was still evolving rapidly—there was little incentive to freeze the current state of the art into a standard. But from the world of radio broadcasting—where sound quality already surpassed records—the first "standard" would emerge.

4. Broadcasters created the first "standard" (1942)

The field that felt the need for standardization most acutely was not the consumer record industry. It was radio broadcasting.

Radio stations exchanged program material on "transcription discs" (professional 16-inch discs). Since the equipment at the originating station and the receiving station differed, inconsistent recording and playback characteristics created real problems.

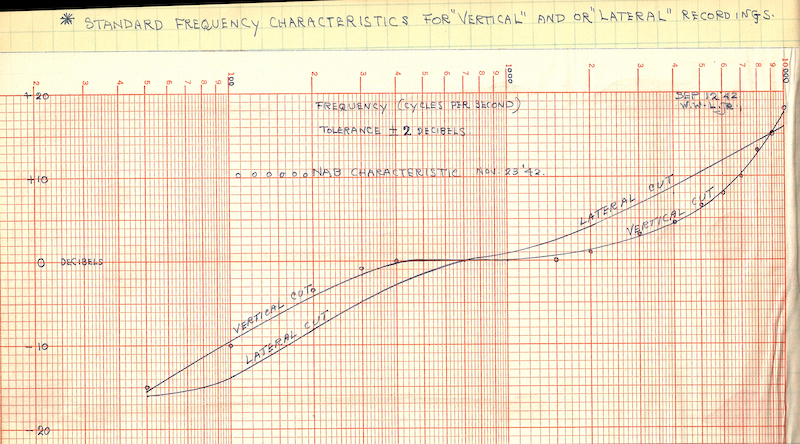

In May 1941, the National Association of Broadcasters (NAB) initiated a standardization effort. Deliberations continued through the United States' entry into World War II in December 1941, and in May 1942 the NAB standard was formally adopted (see Pt.8).

This was the first disc recording standard ever formally established by an industry body.

The recording characteristic for lateral-cut transcription discs (later designated "500B-16") was adopted as the standard. The turnover frequency was 500 Hz (equivalent to a time constant of 318 μs), and treble pre-emphasis was +16 dB at 10 kHz (equivalent to a time constant of 100 μs). A bass shelf was also included in the standard to address rumble and disc warping specific to 33⅓ rpm discs.
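
The equivalences quoted above follow from the usual first-order relation f = 1/(2πτ). The short sketch below checks them; the helper names are hypothetical, and the treble boost assumes a single first-order pre-emphasis section.

```python
import math


def corner_hz(tau_us: float) -> float:
    """Corner frequency in Hz for a time constant given in microseconds."""
    return 1.0 / (2 * math.pi * tau_us * 1e-6)


def time_constant_us(freq_hz: float) -> float:
    """Time constant in microseconds for a corner frequency given in Hz."""
    return 1e6 / (2 * math.pi * freq_hz)


def treble_boost_db(freq_hz: float, tau_us: float) -> float:
    """Boost of a first-order pre-emphasis section at freq_hz, in dB."""
    return 10 * math.log10(1 + (freq_hz / corner_hz(tau_us)) ** 2)


print(round(time_constant_us(500)))             # 318
print(round(treble_boost_db(10_000, 100), 1))   # 16.1
```

A 500 Hz turnover therefore corresponds to about 318 μs, and a 100 μs pre-emphasis gives a boost of about 16 dB at 10 kHz, consistent with the "500" and "16" in the designation.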

But it did not apply to consumer records

Crucially, the 1942 NAB standard was a standard for broadcast transcription discs and did not apply to consumer records.

The NAB standards committee expressed an intention to eventually link the transcription disc standard with a consumer record standard, but in the wartime climate, the effort was limited to the minimum necessary scope.

Consumer 78 rpm records continued to use whatever characteristics each label and studio saw fit. On the other hand, as noted earlier, the concept of "high-fidelity reproduction" had not yet taken hold among ordinary consumers, so apart from a small number of enthusiasts, the lack of standardization was not widely perceived as a problem.

Standardization for consumer records would not arrive until the advent of microgroove discs (LPs and 45 rpm records)—twelve years later, with the RIAA standard of 1954.

Summary: why no unified standard emerged

Looking back over the period from 1925 to 1942, the answer to "why wasn't there a unified standard from the start?" becomes clear:

- EQ curves were initially determined by the physics of the machine — they were not something that could be freely designed

- Technology was evolving rapidly — there was reluctance to freeze the current state of the art into a standard

- Each company evolved independently — recording technology was proprietary, and there was no common forum for industry coordination

- "Faithful reproduction" was not a selling point — there was no concept of "high fidelity" for consumer records; the appeal lay in artists and entertainment. Listeners adjusted their tone controls as a matter of course

- The impetus for standardization came from broadcasters — and did not extend to consumer records

While a standard for broadcast use was born in 1942, standardization for consumer records would take more than another decade. In the intervening years, a pivotal development would occur—the introduction of the LP record.

On to Part 2: How did unification finally happen? →

For further reading (blog series)

- Pt.1 — The physics of constant velocity and constant amplitude; Maxfield & Harrison's electrical recording system

- Pt.2 — The earliest electrical recordings (1920–25); the first electric phonographs; radio vs. the phonograph

- Pt.3 — WE's licensing fees; the evolution of RCA Victor and Columbia recording systems

- Pt.4 — Vitaphone for talking pictures; the birth of transcription discs

- Pt.5 — Stokowski–Bell Labs collaboration; the advent of the Orthacoustic curve

- Pt.6 — Lacquer discs and cutterheads; the birth of lightweight pickups (Pierce & Hunt)

- Pt.7 — The advent and proliferation of crystal pickups

- Pt.8 — The development of the 1942 NAB standard

Revision History

- April 8, 2026: Initial publication